CHCI Participates in 2021 IEEE VR Conference

CHCI students are participating in the IEEE VR Conference, held virtually from March 27 through April 3, 2021. The IEEE Conference on Virtual Reality and 3D User Interfaces (IEEE VR) is a premier international event for presenting research results in the broad areas of virtual, augmented, and mixed reality (VR/AR/XR). IEEE VR showcases groundbreaking research and accomplishments by virtual reality pioneers (scientists, engineers, designers, and artists) who are paving the way for the future of virtual, augmented, mixed, and other forms of mediated reality.

Presentations

Lei Zhang (CS PhD candidate)

IEEE VR 3DUI Contest 2021

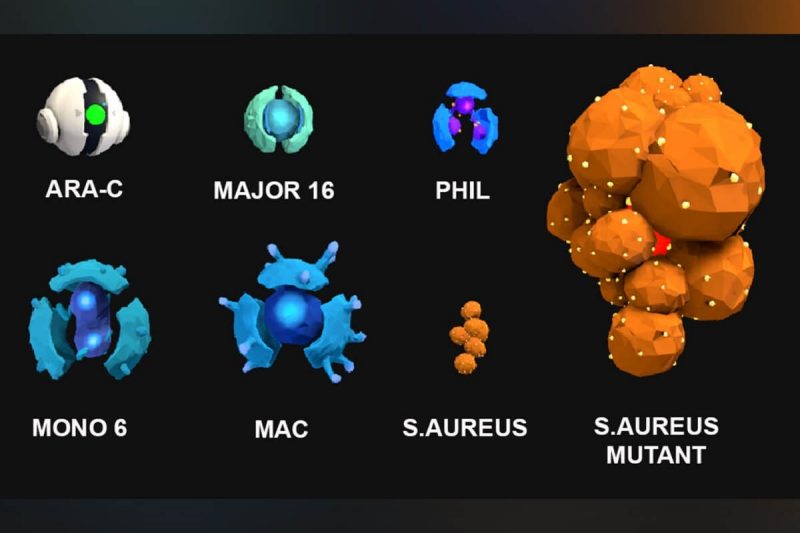

“Fantastic Voyage 2021: Using Interactive VR Storytelling to Explain Targeted COVID-19 Vaccine Delivery to Antigen-presenting Cells”

Science storytelling is an effective way to turn abstract scientific concepts into easy-to-understand narratives. In the context of the COVID-19 pandemic, we developed an interactive storytelling experience in immersive virtual reality (VR) to promote public science education on COVID-19 vaccination. The educational VR experience uses sci-fi storytelling, adventure, and VR gameplay to illustrate how COVID-19 vaccines work with the body's immune system to fight a future infection by the real virus.

Lei Zhang (CS PhD candidate)

IEEE VR 6th Annual Workshop on K-12+ Embodied Learning through Virtual and Augmented Reality (KELVAR)

“Designing immersive virtual reality stories with rich characters and high interactivity to promote learning of complex immunology concepts”

To promote students’ understanding of complex immunology concepts at the college level and to increase their learning motivation and engagement, we leveraged the strengths of immersive storytelling and virtual reality (VR) interactivity to create a guided, interactive, and immersive VR storytelling experience with rich characters and engaging narratives. The experience takes students on an exciting journey inside the human body, where they embody a specific type of white blood cell (a neutrophil) and fight pathogens.

Research Papers

Lee Lisle (CS PhD candidate)

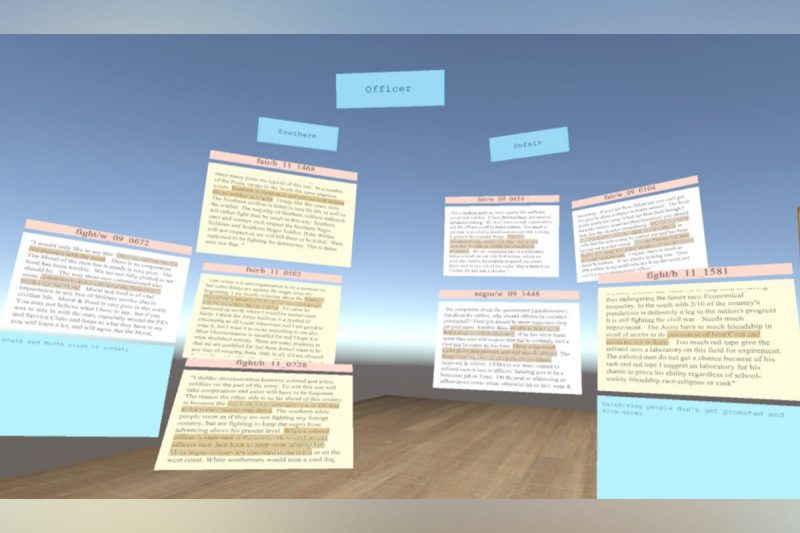

“Sensemaking Strategies with Immersive Space to Think”

Sensemaking involves foraging through and extracting information from large sets of documents, and it can be a cognitively intensive task. A recent approach, the Immersive Space to Think (IST), allows analysts to browse, read, and mark up documents, and to use immersive 3D space to organize and label collections of documents. In this study, we observed seventeen novice analysts performing a historical analysis task to understand how users apply the features of IST to extract meaning from large text-based datasets. We identified three distinct layout strategies that participants employed to make sense of the documents we provided. We also found patterns of interaction and organization that can inform future improvements to the IST approach.

Feiyu Lu (CS PhD candidate)

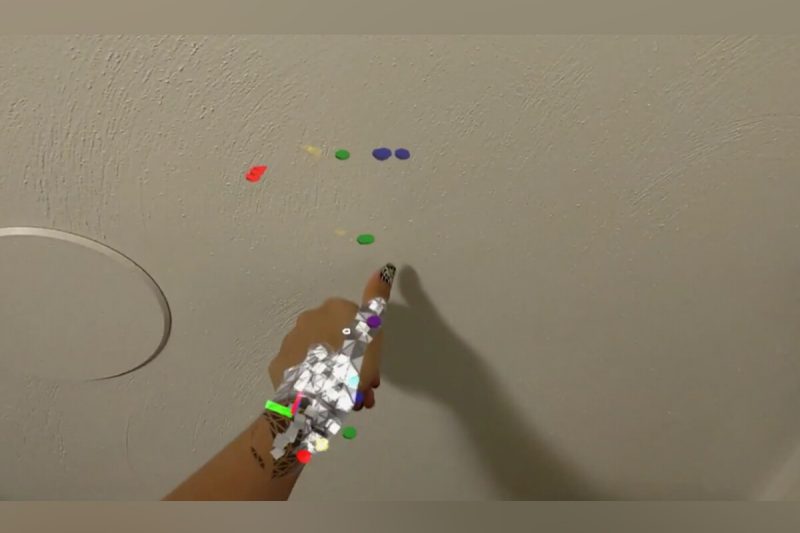

“Evaluating the Potential of Glanceable AR Interfaces for Authentic Everyday Uses”

In the near future, augmented reality (AR) glasses are envisioned to become the next-generation personal computing platform. However, it remains unclear how AR can provide unobtrusive, easy access to information without distracting users while also remaining acceptable to use. To address this question, we implemented two prototypes based on the Glanceable AR paradigm, a promising way of managing and acquiring information by glancing at the periphery of AR head-worn displays. We conducted two separate studies to evaluate our designs. Users appreciated the Glanceable AR approach in authentic everyday use cases, found it less distracting and intrusive than existing devices, and said they would like to use the interface daily if the AR headset's form factor were more like that of eyeglasses.

Leonardo Pavanatto Soares (CS PhD candidate)

“Do we still need physical monitors? An evaluation of the usability of AR virtual monitors for productivity work”

Physical monitors require space, lack flexibility, and can become expensive and less portable in large setups. Virtual monitors, however, can be subject to technological limitations such as lower resolution and a limited field of view. We investigate the impact of using virtual monitors on a current state-of-the-art augmented reality headset for productivity work. We conducted a user study comparing physical monitors, virtual monitors, and a hybrid combination of both in terms of performance, accuracy, comfort, focus, preference, and confidence. Results show that virtual monitors are a feasible approach, albeit with inferior usability and performance, while the hybrid setup fell in between the two.

Posters

Anjali Spra (CS)

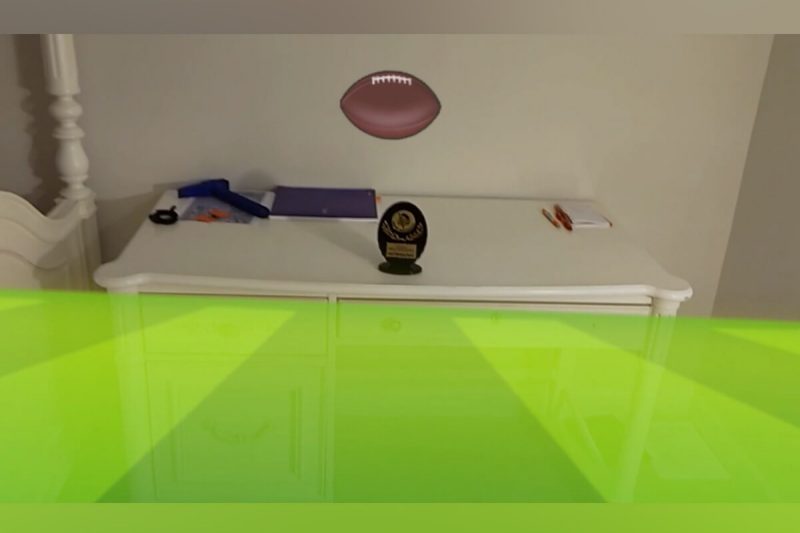

“Leveraging AR and Object Interactions for Emotional Support Interfaces”

This work explores how augmented reality systems can be leveraged to create meaningful, emotional interactions with physical objects. We explore how hand and eye interactions can trigger meaningful sensory feedback to the user. These investigations show how AR interfaces can interpret a user's actions to shift a physical object's primary purpose toward emotional support. We focus specifically on capturing a user's intent with their old high school cheerleading trophy and providing visual and auditory feedback that strengthens the user's sense of connection to cherished past memories.

Yuan Li (CS PhD candidate)

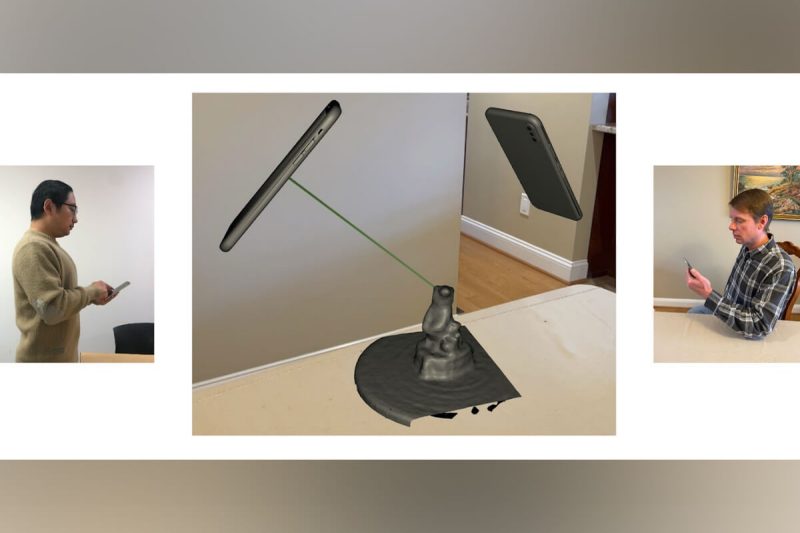

“ARCritique: Supporting Remote Design Critique of Physical Artifacts through Collaborative Augmented Reality”

Design critique sessions require students and instructors to jointly view and discuss physical artifacts. In remote learning scenarios, however, available tools (such as videoconferencing) are insufficient because they communicate spatial information ineffectively and inefficiently. This paper presents ARCritique, a mobile augmented reality application that combines KinectFusion and ARKit to allow users to 1) scan artifacts and share the resulting 3D models, 2) view a model simultaneously in a shared virtual environment from remote physical locations, and 3) point to and draw on the model to aid communication. A preliminary evaluation of ARCritique revealed strong potential for supporting remote design education.

Nanlin Sun (CS MA student)

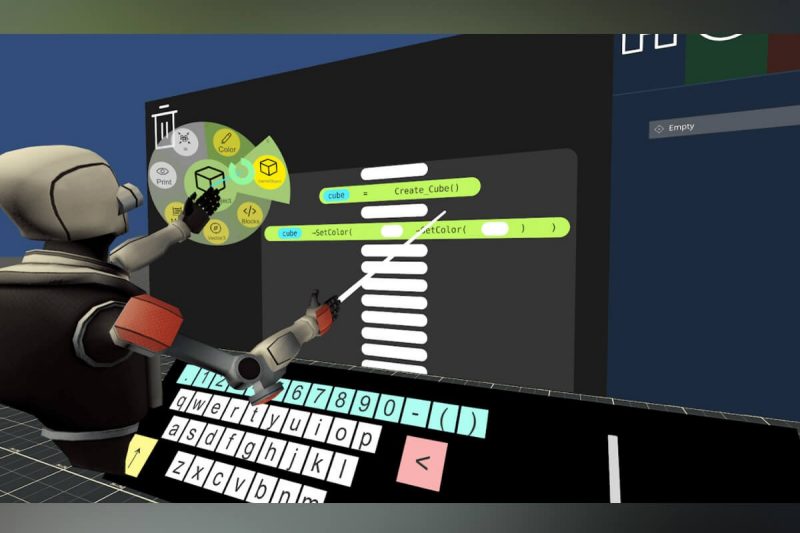

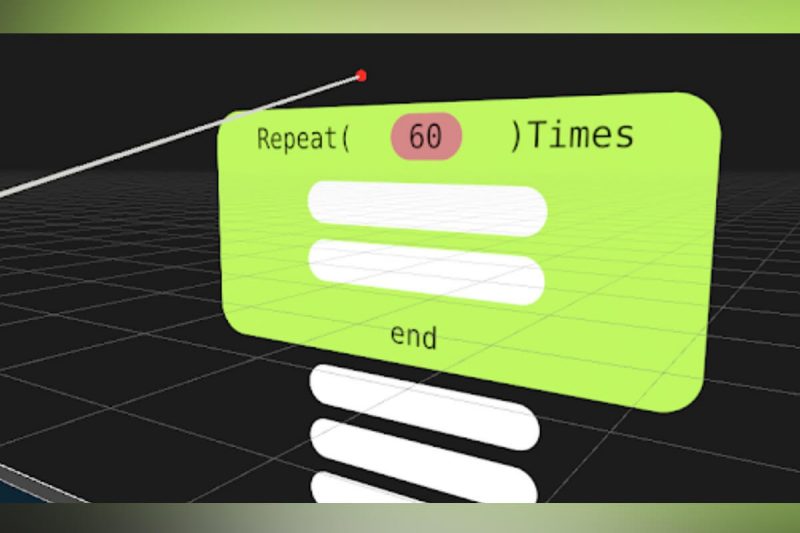

“Programmable Virtual Reality Environments”

We present a programmable virtual environment that allows users to create and manipulate 3D objects via code while inside virtual reality. Our environment supports control of 3D transforms as well as physical and visual properties. Programming is done through a custom visual block language that is translated into Lua scripts. We believe this project will benefit computer science education by helping students learn programming and spatial thinking more efficiently.