Research talks by Sukrit Venkatagiri and Dylan Losey on October 15th

October 11, 2021

Please join us at the SI and IE research meetings for talks by Dylan Losey and Sukrit Venkatagiri on Friday, October 15.

Research group meetings are held in a hybrid form. Physical location: ICAT Observation Room (room 251) in the Moss Arts Center. Zoom links for events are available by subscribing to the CHCI calendar.

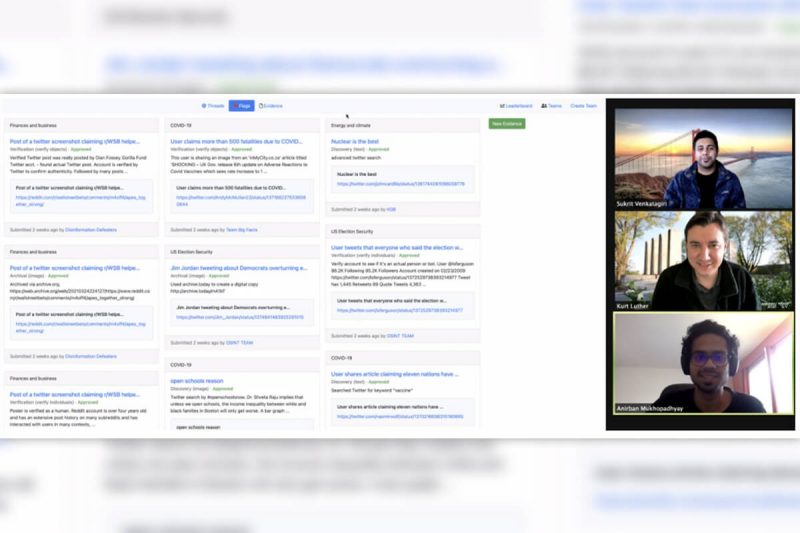

Sukrit Venkatagiri (PhD Student, Computer Science) will speak at the Social Informatics meeting this Friday, October 15th, at 10 am. Sukrit will present his CSCW paper, “CrowdSolve: Managing Tensions in an Expert-Led Crowdsourced Investigation”, which he co-authored with Aakash Gautam (VT CS and CHCI Alum) and Kurt Luther (Associate Professor, Computer Science).

Abstract

Investigators in fields such as journalism and law enforcement have long sought the public’s help with investigations. New technologies have also allowed amateur sleuths to lead their own crowdsourced investigations -- that have traditionally only been the purview of expert investigators -- with mixed results. Through an ethnographic study of a four-day, co-located event with over 250 attendees, we examine the human infrastructure responsible for enabling the success of an expert-led crowdsourced investigation. We find that the experts enabled attendees to generate useful leads; the attendees formed a community around the event; and the victims’ families felt supported. Additionally, the co-located setting, legal structures, and emergent social norms impacted collaborative work practice. We also surface three important tensions to consider in future investigations and provide design recommendations to manage these tensions.

Dylan Losey will present “Interactive, Inclusive, and Revealing Robot Learners” at the Immersive Experiences meeting this Friday, October 15th, at 1 pm. Dylan Losey, a new member of CHCI, is an assistant professor in Mechanical Engineering at Virginia Tech. His research interests lie at the intersection of human-robot interaction, learning, and control.

Abstract

Our society is rapidly developing interactive robots that collaborate with humans, e.g., self-driving cars near pedestrians, surgical devices with doctors, and assistive arms for disabled adults. The humans who interact with these systems will not always be experts in robotics: so how should we facilitate mutual understanding between everyday users and learning agents? My talk will examine this question from two perspectives: the robot’s and the human’s. From the robot’s point-of-view, I will formalize inclusive algorithms that learn what the human really meant rather than what the human actually demonstrated. From the human’s point-of-view, I will leverage active feedback and cognitive models to reveal what the robot has learned and when the robot is uncertain. Viewed together, these perspectives enable emergent and co-adaptive interaction between humans and their robot partners.