Wallace Santos Lages and Chris North Receive CHCI Grant for Immersive Analytics project

November 30, 2021

Wallace Santos Lages (SOVA) and Chris North (CS), in collaboration with colleagues at the University of New Mexico (Melanie Moses, CS, Biology; Tobias Fischer, Earth and Planetary Science; Matthew Fricke, CS; and John Ericksen, CS PhD student), received CHCI planning grant funding from May through July 2021, and the work continues. The research project, “An Immersive Analytics Framework for Drone-Collected Environmental Data,” is designing a new immersive analytics framework that integrates data analysis and control of robotic data acquisition systems. CHCI planning grants fund projects leading to large-scale externally funded research efforts. The goal of the grant program is to identify and support interdisciplinary teams of researchers in convergent research areas to prepare for submission of large external proposals.

The Lages-North grant allowed investigators to begin the design and prototyping of a mixed reality application for real-time analysis of environmental data using the Microsoft HoloLens. This prototype has helped refine the research questions and approach, and it serves as a preliminary result supporting a collaborative proposal to the NSF program National Robotics Initiative 3.0: Innovations in Integration of Robotics (NRI-3.0). NRI-3.0 supports research that promotes the integration of robots to the benefit of humans, including human safety and human independence, focusing on research in the innovative integration of robotic technologies. NSF-supported projects will range from $250,000 to $1,500,000 for a period of up to four years.

Small-scale, remote-controlled quadcopters, also known as drones, have filled a critical gap between surface sensors and airplane/satellite systems. Their small size and maneuverability allow them to be operated at low altitudes and over populated areas, and to be transported easily. When fitted with sensors, they become an excellent platform for collecting geolocated data, offering higher spatial resolutions than ground- or space-based equivalents. Because they combine low cost with high reliability, they can also be deployed in groups (swarms) to cover large areas efficiently.

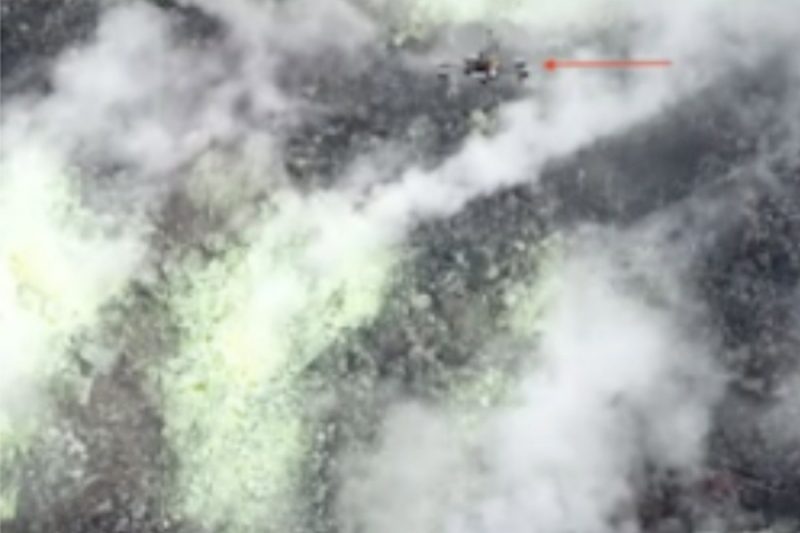

UNM collaborator Moses' pioneering work on monitoring CO2 plumes from volcanoes is one example of drone use in data collection. More than 10% of the world’s population lives in the destructive zone of volcanoes, and a quarter of a million people have perished in volcanic eruptions in the last 500 years. A key task for volcano surveillance is to locate the maximum CO2 flux in a dynamic gas plume. Researchers have demonstrated that changes in volcanic gas emission can, in some cases, provide early warning hours to days in advance of hazardous volcanic activity. However, only 10 of the approximately 300 currently active volcanoes are characterized by long-term datasets that enable any assessment of temporal CO2 variability. Satellite remote sensing of CO2 is infeasible, so sampling is currently performed by ground-based sensors or aerial surveys with piloted aircraft. These techniques are costly and dangerous, and they produce temporally and spatially coarse measurements.

Drones present an emerging solution that reduces risk to volcanologists and has the potential to markedly increase sampling resolution within volcano plumes. As part of an international team of research universities, the VOLCAN project research team recently demonstrated that drones can feasibly sample CO2 from an active volcano in Papua New Guinea. However, drone loss was very common. Sudden and violent thermal updraughts, acidic plumes, and rugged cliffs were some of the many conditions that destroyed drones. In addition, flight times of quadcopters are short. Even the drones that the researchers developed specifically for that survey are limited to one hour of flight. This gives scientists a limited amount of time to analyze the data and decide on any changes to the survey patterns.

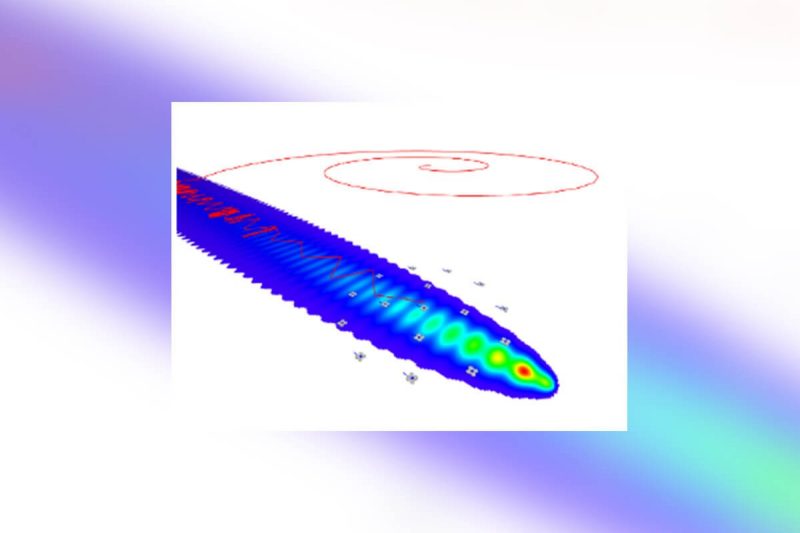

The researchers have developed an analysis tool that will allow volcano scientists to quickly integrate their domain knowledge with real-time data sensed by the swarm. It will also feature an intuitive interface for fast, on-site analysis and for guiding the swarm towards the most promising monitoring locations. Tools for quick data analysis and real-time decision-making are also critical for many other surveying applications. For example, during natural disasters and other events where a lack of information may put human lives at risk, quick assessment of the situation is paramount.

The research team will support fast analytical reasoning by using mixed reality technologies to allow users to naturally externalize and offload their cognitive processes into spatially organized visual structures. This approach, known as immersive analytics, is uniquely suited to the analysis of data coming from drones (such as scalar and vector fields) because such data is inherently three-dimensional. Two-dimensional data (e.g., photos, terrain data) can also be incorporated. For low-level control, researchers will use algorithms that are responsive to the input from scientists and to information gathered by the swarm in real time. One example is LoCUS, a bio-inspired algorithm the researchers developed for volcano drone surveys. It can maintain a spatially dispersed swarm of drones and automatically replace failed drones with others nearby, while keeping the symmetry essential to efficient gradient following.

The goal of this research is to enable a new class of immersive analytics frameworks that integrate data analysis and control of robotic data acquisition systems. Researchers will leverage the benefits of mixed reality interfaces (augmented and virtual reality) to close the loop between domain experts and autonomous collection systems. Their first step was to design and prototype a visualization and analysis tool for volcano CO2 plume survey data obtained by aerial drone swarms. The final system will go beyond interactive immersive visualization and will allow analysts to generate search strategies to control multi-robot data acquisition systems in real time.

The Research Team:

Wallace Lages is an assistant professor in the VT School of Visual Arts, where he leads the Reality Design Studio. His research focuses on mixed reality interaction design, with applications in everyday augmented reality use and immersive experiences. His research topics include the investigation of perception and cognition in mixed reality, the design of augmented reality interfaces, and interaction in immersive games. @wallacelages

Chris North is a professor in the VT Department of Computer Science. He is the associate director of the Sanghani Center and leads the Visual Analytics research group. His research seeks to enable people to interactively visualize and explore big data to discover new insights, by establishing usable, effective, and scientifically grounded methods for visual interaction. His current research themes focus on creating powerful interactions for computational analytics that respond to human cognitive sense-making activity, and on exploiting large high-resolution displays to create rich embodied-interactive spaces.

Luke McCormick is a VT Computer Science master's student who worked on the development of a HoloLens prototype to display the CO2 data collected by the VOLCAN team. McCormick’s work was funded by the CHCI grant in the summer of 2021.

The project partners from the University of New Mexico (UNM) are part of the VOLCAN project, an NSF-sponsored research effort to develop novel bio-inspired software and drones to measure and sample volcanic gases. The UNM team is funded by the NSF award NRI: INT: Adaptive Bio-inspired Co-Robot algorithms for volcano monitoring.

Melanie Moses is a professor in the UNM Department of Computer Science, with a secondary appointment in the UNM Department of Biology, and is an external faculty member of the Santa Fe Institute. The Moses Biological Computation Lab studies complex biological and information systems, the scaling properties of networks, and the general rules governing the acquisition of energy and information in complex adaptive systems. Moses develops computational and mathematical models of biological systems and applies that knowledge to biologically inspired computation (particularly swarm robotics).

Tobias Fischer, Professor of Earth and Planetary Science. Tobias Fischer is a volcanologist and geochemist who works on fluids discharging from active volcanoes and hydrothermal systems. He uses stable isotopes of C, N, S, Cl, H, and O to constrain the sources of volatiles. He and his students also measure diffuse degassing in tectonically active areas such as the East African Rift. Current field areas are volcanoes in the Aleutians, Central America, the East African Rift (Kenya, Tanzania, Ethiopia), and Antarctica.

Matthew Fricke, Research Assistant Professor of Computer Science. Matthew Fricke studies distributed complex systems including supercomputing, machine learning, swarm robotics, and biological systems. His computational biology research focuses on the efficiency of search processes such as ant foraging and immune system activation. His swarm robotics work focuses on search strategies for resource collection in support of solar system exploration and volcano surveys. Recently he has applied machine learning to modeling climate change and to biosignature detection.

John Ericksen, CS PhD student. John Ericksen is a software developer at Honeywell and a computer science Ph.D. student at the University of New Mexico with the Moses Biological Computation Lab. His current research applies cutting-edge concepts to flying robot swarms. He has also worked on a variety of research topics, including software architecture, evolutionary complex systems, and intelligent swarm robotics.