CHCI participation in IEEE VR 2024

April 8, 2024

Multiple CHCI faculty and students are participating in IEEE VR this spring with papers, workshops, contest entries, and doctoral consortium papers. CHCI faculty and students are listed in bold.

IEEE VR 2024

The IEEE Conference on Virtual Reality and 3D User Interfaces (IEEE VR) is the premier international event for presenting research results in virtual, augmented, and mixed reality (VR/AR/MR). Since 1993, IEEE VR has presented groundbreaking research and accomplishments by virtual reality pioneers: scientists, engineers, designers, and artists, paving the way for the future. IEEE VR soon expanded its scope to include augmented, mixed, and other forms of mediated reality. Similarly, the IEEE Symposium on 3D User Interfaces (3DUI), which started as a workshop at IEEE VR in 2004, has become the premier venue for 3D user interfaces and 3D interaction in virtual environments.

IEEE VR Conference Leadership:

- Alexander Giovannelli, Communications Co-Chair

- Cassidy Nelson and Lee Lisle (VT CS/CHCI alum), among others, XR Future Faculty Forum Chairs

Papers

Alexander Krasner and Joseph Gabbard

MusiKeys: Exploring Haptic-to-Auditory Sensory Substitution to Improve Mid-Air Text-Entry

Abstract: We investigated using auditory feedback in virtual reality mid-air typing to communicate the missing haptic feedback information typists typically receive when using a physical keyboard. We conducted a study with 24 participants, encompassing four mid-air virtual keyboards with increasing amounts of feedback information and a fifth physical keyboard as a reference. Results suggest clicking feedback on key-press and key-release improves performance compared to no auditory feedback, which is consistent with the literature. We find that audio can substitute information contained in haptic feedback in that users can accurately perceive presented information. However, this understanding did not translate to significant differences in performance.

![The image is a diagram consisting of three panels labeled [A], [B], and [C], detailing an auditory augmentation design for a musical keyboard interface called MusiKeys. Panel [A] shows a side view of a hand pressing a key with different distances labeled for start, press, and release positions, alongside a low-pass filter cutoff frequency graphic. Panel [B] shows two modulation curves for sound volume as a function of key press distance, with annotations about the relationship between the distance pressed and the percentage change in volume. Panel [C] illustrates the mapping of fingers to piano keys (D3, F3, A4, C4, E4, G4, B5) with corresponding left and right hand fingers, including the thumbs. Notes and explanations are provided on the side, mentioning that the y-values stay constant in the release mode and that the curves are approximate.](/content/hci_icat_vt_edu/en/research/chci-participation-in-ieee-vr-2024/_jcr_content/content/adaptiveimage_1788081170.transform/m-medium/image.jpg)

Christos Lougiakis, Jorge Juan González, Giorgos Ganias, Akrivi Katifori, Ioannis-Panagiotis Ioannidis, Maria Roussou

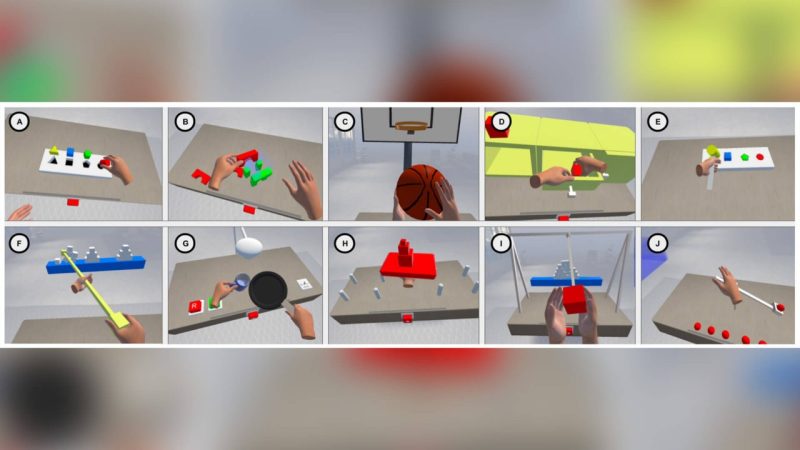

Comparing Physics-based Hand Interaction in Virtual Reality: Custom Soft Body Simulation vs. Off-the-Shelf Integrated Solution

Abstract: Physics-based hand interaction in VR has been extensively explored, but almost none of the solutions are usable. The only exception is CLAP, a custom soft-body simulation offering realistic and smooth hand interaction in VR. Even CLAP, however, imposes constraints on virtual hand and object behavior. We introduce HPTK+, a software solution that utilizes the physics engine NVIDIA PhysX. Benefiting from the engine's maturity and integration with game engines, we aim to enable more general and free-hand interactions in virtual environments. We conducted a user study with 27 participants, comparing the interactions supported by both libraries. Results indicate an overall preference for CLAP but no significant differences in other measures or performance, except variance. These findings provide insights into the libraries' suitability for specific tasks. Additionally, we highlight HPTK+'s exclusive support for diverse interactions, positioning it as an ideal candidate for further research in physics-based VR interactions.

Workshop Organizers and Papers

Isaac Cho, Kangsoo Kim, Dongyun Han, Allison Bayro, Heejin Jeong, Hyungil Kim, Hayoun Moon, and Myounghoon (Philart) Jeon are the organizers of the 3rd International Workshop on eXtended Reality for Industrial and Occupational Supports (XRIOS).

XRIOS aims to identify the current state of XR research and gaps in the scope of human factors and ergonomics, mainly related to industrial and occupational tasks. Further, the workshop aims to discuss potential future research directions and to build a community that bridges XR developers, human factors, and ergonomics researchers interested in industrial and occupational applications.

Leonardo Pavanatto and Doug Bowman helped organize the xrWORKS workshop. The 1st Workshop on Extended Reality for Knowledge Work (xrWORKS) aims to create a space where both academic and industry researchers can discuss their experiences and visions to continue growing the impact of XR in the future of work. Through a combination of position papers, in-person demonstrations, and a brainstorming session, we aim to fully understand the space and debate the future research agenda. "Knowledge work" follows the definition initially coined by Drucker (1966), which states that information workers apply theoretical and analytical knowledge to develop products and services. Much of the work might be detached from physical documents, artifacts, or specific work locations and is mediated through digital devices such as laptops. Workers include architects, engineers, scientists, design thinkers, public accountants, lawyers, and academics, whose job is to "think for a living."

Leonardo Pavanatto and Doug Bowman will present their paper "Virtual Displays for Knowledge Work: Extending or Replacing Physical Monitors for More Screen Space" at the xrWORKS workshop.

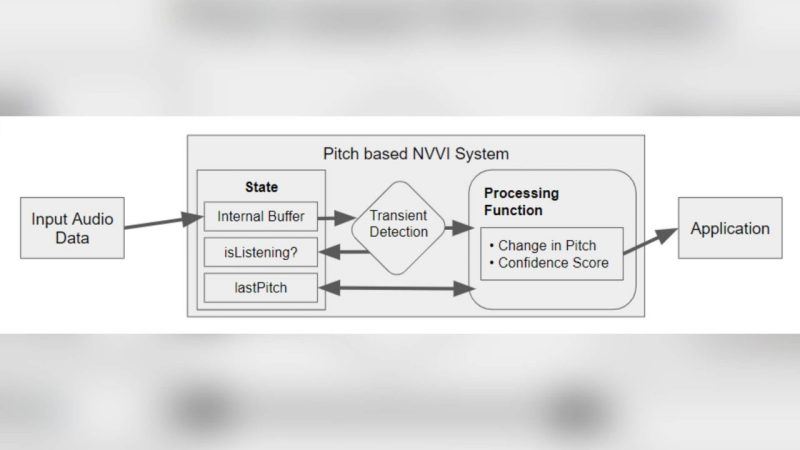

Samuel Williams and Denis Gracanin

"An Approach to Pitch-Based Implementation of Non-verbal Vocal Interaction (NVVI)" will be presented at the Workshop on Novel Input Devices and Interaction Techniques (NIDIT) and will be published in the Proceedings of the 2024 IEEE Conference on Virtual Reality and 3D User Interfaces Abstracts and Workshops (VRW).

Abstract: Non-verbal vocal interaction (NVVI) allows people to use non-speech as an interaction technique. Many forms of NVVI exist, including humming, whistling, and tongue-clicking, but the quantity of existing research is limited and high in variety. This work attempts to bridge the field and provides a large-scale study exploring the usability of a pitch-based NVVI system using a relative pitch approach. The study tasked users with controlling an HTML slider using the NVVI technique by humming and whistling. Findings show that users perform better with humming than whistling. Notable phenomena occurring during interactions, including vocal scrolling and overshooting, are explored.

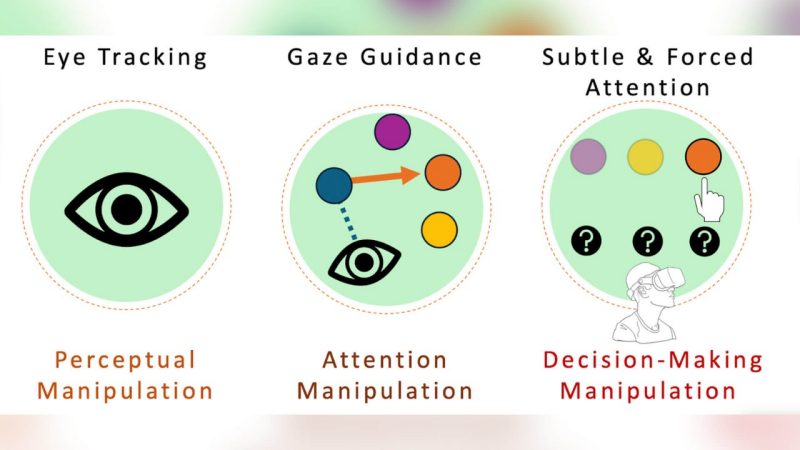

Brendan David John and Nikki Ramirez

"Deceptive Patterns and Perceptual Risks in an Eye-Tracked Virtual Reality" will be presented at the IDEATExR Workshop and will be published in the Proceedings of the 2024 IEEE Conference on Virtual Reality and 3D User Interfaces Abstracts and Workshops (VRW).

Several VT CHCI faculty, students, and alumni helped organize the IDEATExR Workshop, which focuses on inclusion, diversity, equity, accessibility, transparency, and ethics in XR. Organizers include Lee Lisle (VT CS/CHCI alum), Cassidy R. Nelson (ISE Ph.D. student), Nayara de Oliveira Faria (ISE Ph.D. student), Rafael N.C. Patrick (ISE faculty), and Missie Smith (VT ISE alum), among others. This is the first year that IEEE VR is hosting the IDEATExR Workshop, which originated at the IEEE International Symposium on Mixed and Augmented Reality (ISMAR).

Workshop abstract: IEEE VR and ISMAR are premier venues for extended reality (XR) research that bring together hundreds of researchers across disciplines and research spectrums, covering both technical and human aspects of XR. However, at IEEE VR, only 15% of first authors are women, and approximately 95% of the global population is excluded from VR research, resulting in poor generalizability. In addition, ethics informing XR research has been identified as one of the grand challenges facing human-computer interaction research today, with the replication crisis featuring transparency as a critical step for remediation. These factors make formal discussions surrounding inclusion, diversity, equity, accessibility, transparency, and ethics in XR timely and necessary. Participants in this workshop will have the opportunity to provide their insights on what is working for our community and what isn’t, effectively helping to shape the future of IEEE VR and ISMAR.

Contest Entries/Demos

3DUI Contest:

Logan Lane, Alexander Giovannelli, Ibrahim Asadullah Tahmid, Francielly Rodrigues, Cory Ilo, Darren Hsu, Shakiba Davari, Christos Lougiakis, Doug Bowman

The Alchemist: A Gesture-Based 3D User Interface for Engaging Arithmetic Calculations

Abstract: This paper presents our solution to the IEEE VR 2024 3DUI contest. We present The Alchemist, a VR experience tailored to aid children in practicing and mastering the four fundamental mathematical operators. In The Alchemist, players embark on a fantastical journey where they must prepare three potions to break a malevolent curse imprisoning the Gobbler kingdom. Our contributions include the development of a novel number input interface, Pinwheel, an extension of PizzaText [7], and four novel gestures, each corresponding to a distinct mathematical operator, designed to assist children in retaining practice with these operations. Preliminary tests indicate that Pinwheel and the four associated gestures facilitate the quick and efficient execution of mathematical operations.

![A screenshot of a video game titled "The Alchemist" featuring a main menu screen. The game's setting appears to be an old-fashioned alchemist's laboratory with wooden floors and walls, various hanging pots and lanterns, and a large, central workbench with potion bottles. There are three menu options overlaid on the scene: "Start Game," "Start Game [Tutorial]," and "High Scores."](/content/hci_icat_vt_edu/en/research/chci-participation-in-ieee-vr-2024/_jcr_content/content/adaptiveimage_346392863.transform/m-medium/image.jpg)

Posters

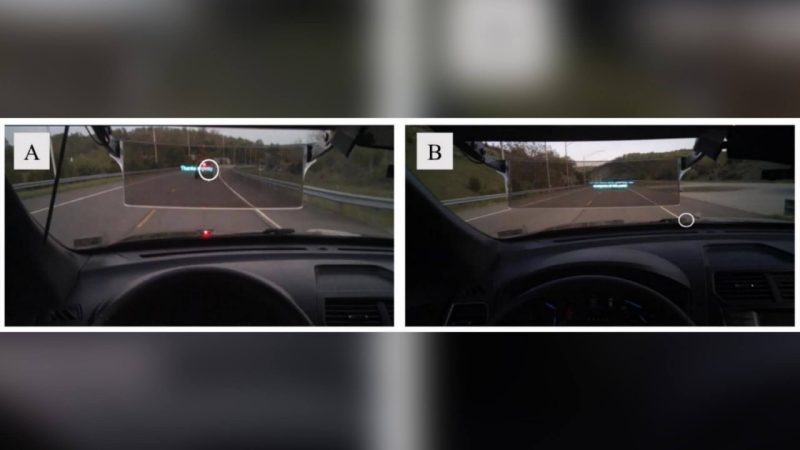

Nayara Faria, Brian Williams, and Joseph Gabbard

I look, but I don't see it: Inattentional Blindness as an Evaluation Metric for Augmented-Reality

Abstract: As vehicles increasingly incorporate augmented reality (AR) into head-up displays, assessing their safety in driving becomes vital. AR, blending real and synthetic scenes, can cause inattentional blindness (IB), where crucial information is missed despite users looking directly at it. Traditional HCI methods, centered on task time and accuracy, fail to evaluate AR's impact on human performance in safety-critical contexts. Our real-road user study with AR-enabled vehicles focuses on inattentional blindness as a critical metric for assessment. The results underline the importance of including IB in AR evaluations, extending to other safety-critical sectors like manufacturing and healthcare.

Doctoral Consortium

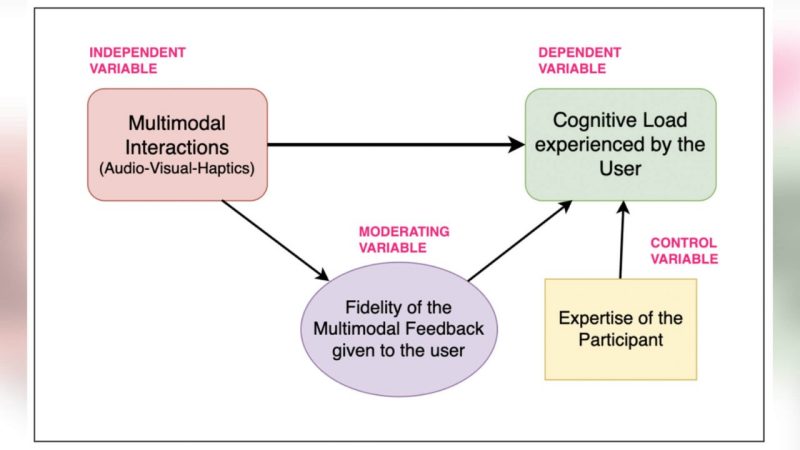

Nikitha Chandrashekar. Understanding the Impact of the Fidelity of Multimodal Interactions in XR-based Training Simulators on Cognitive Load. Proceedings of the 2024 IEEE Conference on Virtual Reality and 3D User Interfaces Abstracts and Workshops (VRW).

Abstract: As eXtended Reality (XR) technologies evolve, integrating multimodal interfaces becomes a defining factor in immersive experiences. Existing literature highlights a lack of consensus regarding the impact of these interfaces in XR environments. The Uncanny Valley Effect and its amplification in multimodal stimuli pose a potential challenge of increased cognitive load due to dissonance between user expectations and reality. My research pivots on the observation that current studies often overlook a crucial factor: the fidelity of stimuli presented to users. The main goal of my research is to answer the question of how multimodal interactions in XR-based applications impact the cognitive load experienced by the user. To address this gap, I employ a comprehensive human-computer interaction (HCI) research approach involving frameworks, theories, user studies, and guidelines. The goal is to systematically investigate the interplay of stimulus fidelity and cognitive load in XR, aiming to offer insights for the design of Audio-Visual-Haptic (AVH) interfaces.

Elham Mohammedrezaei. Reinforcement Learning for Context-aware and Adaptive Lighting Design using Extended Reality: Impacts on Human Emotions and Behaviors. Proceedings of the 2024 IEEE Conference on Virtual Reality and 3D User Interfaces Abstracts and Workshops (VRW).

Abstract: In the interconnected world of smart built environments, Extended Reality (XR) emerges as a transformative technology that can enhance user experiences through personalized lighting systems. Integrating XR with deep reinforcement learning for adaptive lighting design (ALD) can optimize visual experiences while addressing real-time data analysis, XR system complexities, and integration challenges with building automation systems. The research centers on developing an XR-based ALD system that dynamically responds to user preferences, positively impacting human emotions, behaviors, and overall well-being.

Sunday Ubur. Enhancing Accessibility and Emotional Expression in Educational Extended Reality for Deaf and Hard of Hearing: A User-centric Investigation. Proceedings of the 2024 IEEE Conference on Virtual Reality and 3D User Interfaces Abstracts and Workshops (VRW).

Abstract: My research addresses accessibility challenges for the Deaf and Hard of Hearing (DHH) population, specifically within Extended Reality (XR) applications for education. The study aims to enhance accessibility and emotional expression in XR environments, emphasizing improved communication. The objectives include identifying best practices and guidelines for designing accessible XR, exploring prescriptive and descriptive aspects of accessibility design, and integrating emotional aspects for DHH users. The three-phase research plan includes a literature review, the development of an accessible XR system, and user studies. Anticipated contributions involve enhanced support for DHH individuals in XR and a deeper understanding of human-computer interaction aspects.