CHCI Members Showcase Research at the 2022 IEEE VR Conference

The IEEE Conference on Virtual Reality and 3D User Interfaces (IEEE VR) is the premier international event for the presentation of research results in the broad areas of virtual, augmented, and mixed reality (VR/AR/XR). CHCI faculty and student members presented research at the event, which was held virtually from March 12-16, 2022.

CHCI Faculty and Students are indicated in bold.

VT CS/CHCI faculty and students were honored with multiple awards

Doug Bowman (Computer Science) was honored as a member of the inaugural class of the IEEE VGTC Virtual Reality Academy for “significantly advancing knowledge in 3D UIs and VR, profoundly influencing how these systems are characterized and designed.”

The VT CS 3DI Group’s team (supervised by Doug Bowman) in the 3DUI Contest took first place! This is their 2nd win in a row, and their 6th overall since the contest began in 2010. The team leader was CS PhD student Lee Lisle. Other team members included Feiyu Lu (CS PhD student), Shakiba Davari (CS PhD student), Ibrahim Tahmid (CS PhD student), Alexander Giovannelli (CS MS student), Cory Ilo (CS PhD student), Leonardo Pavanatto (CS PhD student), Lei Zhang (former iPhD student), Luke Schlueter (CS undergraduate student), and Doug Bowman. The team presented their work on Clean the Ocean: An Immersive VR Experience Proposing New Modifications to Go-Go and WiM Techniques.

Papers

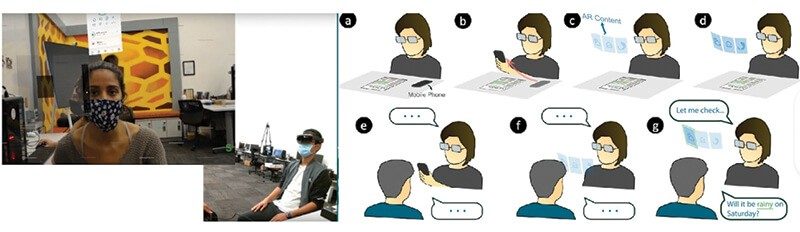

Shakiba Davari (PhD student, Computer Science), Feiyu Lu (PhD student, Computer Science), Doug Bowman (Computer Science)

Glanceable Augmented Reality interfaces have the potential to provide fast and efficient information access for the user. However, the virtual content's placement and accessibility depend on the user context. We designed a Context-Aware AR interface that can intelligently adapt for two different contexts: solo and social. We evaluated information access using Context-Aware AR compared to current mobile phones and non-adaptive Glanceable AR interfaces. Our results indicate the advantages of the Context-Aware AR interface for information access efficiency, avoiding negative effects on primary tasks or social interactions, and overall user experience.

Richard Skarbez, Joe Gabbard (Industrial and Systems Engineering), Doug Bowman, Todd Ogle (University Libraries), Thomas Tucker (School of Visual Arts)

Virtual replicas of real places: Experimental investigations

As virtual reality (VR) technology becomes cheaper, higher-quality, and more widely available, it is seeing increasing use in a variety of applications including cultural heritage, real estate, and architecture. A common goal for all these applications is a compelling virtual recreation of a real place. Despite this, there has been very little research into how users perceive and experience such replicated spaces. This paper reports the results from a series of three user studies investigating this topic. Results include that the scale of the room and large objects in it are most important for users to perceive the room as real and that non-physical behaviors such as objects floating in air are readily noticeable and have a negative effect even when the errors are small in scale.

Mohammed Safayet Arefin, Nate Phillips, Alexander Plopski, Joseph L Gabbard, J. Edward Swan

In optical see-through augmented reality (AR), information is often distributed between real and virtual contexts, and often appears at different distances from the user. To integrate information, users must repeatedly switch context and change focal distance. Previously, Gabbard, Mehra, and Swan (2018) examined these issues, using a text-based visual search task on a monocular, optical see-through AR display. Our study partially replicated and extended this task on a custom-built AR Haploscope for both monocular and binocular viewing. Results establish that context switching, focal distance switching, and transient focal blur remain important AR user interface design issues.

Workshops

8th Workshop on Perceptual and Cognitive Issues in XR (PERCxR)

Keynote Speaker: Joe Gabbard

Co-Organizer: Nayara de Oliveira Faria (PhD student, Industrial and Systems Engineering)

Workshop Description: The crux of this workshop is the creation of a better understanding of the various perceptual and cognitive issues that inform and constrain the design of effective extended reality systems. There is neither an in-depth overview of these factors, nor well-founded knowledge on most effects as gained through formal validation. In particular, long-term usage effects are inadequately understood. Meanwhile, mobile platforms and emerging display hardware ("glasses") promise to rapidly increase the number of users, as well as the system usage duration. To fulfill usability needs, a thorough understanding of perceptual and intertwined cognitive factors is highly needed by both research and industry: issues such as depth misinterpretation, object relationship mismatches and information overload can severely limit usability of applications, or even pose risks in their usage. Based on the gained knowledge, for example, new interactive visualization and view management techniques can be iteratively defined, developed and validated, optimized to be congruent with human capabilities and limitations en route to more usable application interfaces.

1st International Workshop on eXtended Reality for Industrial and Occupational Supports (XRIOS)

Co-Organizer: Myounghoon (Philart) Jeon (Industrial and Systems Engineering)

Alan Smith, Charlie Duff (CHCI Student Council Member, Industrial and Systems Engineering), Rodrigo Sarlo, Joseph L. Gabbard

Paper: Wearable Augmented Reality Interface Design for Bridge Inspection

Doug Bowman, Joe Gabbard, Nazila Roofigari-Esfahan (Building Construction), Keerthana Adapa, Daniel Auerbach, Kathryn Britt, Cory Ilo (PhD student, Computer Science)

Paper: BuildAR: A Proof-of-Concept Prototype of Intelligent Augmented Reality in Construction

Nazila Roofigari-Esfahan, Curt Porterfield, Todd Ogle, Tanner Upthegrove (Institute for Creativity, Arts, and Technology), Myounghoon Jeon, Sang Won Lee (Computer Science)

Paper: Group-based VR Training to Improve Hazard Recognition, Evaluation, and Control for Highway Construction Workers

Workshop Description: This workshop, eXtended Reality for Industrial and Occupational Supports (XRIOS), aims to identify the current state of XR research and the gaps in the scope of human factors and ergonomics, mainly related to industrial and occupational tasks, and to discuss potential future research directions. XRIOS will build a community that bridges XR developers and human factors and ergonomics researchers interested in industrial and occupational applications.

Tutorials

Denis Gračanin (Computer Science)

Empathy-enabled Extended Reality

Empathy is defined as an ability to understand and share others' feelings, a critical part of meaningful social interactions. There are different types of empathy, such as cognitive, emotional and compassionate empathy. A major claim about Extended Reality (XR) is that it can foster empathy and elicit empathetic responses through digital simulations. The availability of portable and affordable bio-sensors (especially contactless) makes it possible to measure, in real-time, physiological and other signals while using XR. These measurements (such as heart rate, breathing rate, facial expressions, electro-dermal activity, EEG, and others) can inform, in real-time, about a user's cognitive and emotional state and enable empathetic responses and measurements. This information can be used both to evaluate the impact of XR content on the user and to adapt XR content based on the user's state. The goal of this tutorial is to introduce the participants to the concept of empathy and its use in XR applications. The participants will learn how to incorporate contextualized empathy data, information, and services into XR applications and use that to improve user experience. The use of empathy will be presented from two points of view. First, we will view XR as an 'empathy machine' that elicits empathetic responses in users. Second, we will view XR as an 'empathetic entity' that empathizes with users and adjusts the XR user experience accordingly. Empathy-enabled XR combines these two views and provides a two-way empathy link between users and XR. Several example applications from healthcare and education domains will be presented.

Doctoral Consortium

Doug Bowman and Joe Gabbard served as mentors to PhD students in the conference’s doctoral consortium.

Shakiba Davari (PhD student, Computer Science) presented her PhD research at the Doctoral Consortium:

[DC] Context-Aware Inference and Adaptation in Augmented Reality

The recent developments in Augmented Reality (AR) eyeglasses promise the potential for more efficient and reliable information access. This reinforces the widespread belief that AR Glasses are the next generation of personal computing devices, providing efficient information access to the user all day, every day. However, to realize this vision of all-day wearable AR, the AR interface must address the challenges that the constant and pervasive presence of virtual content may cause. Throughout the day, as the user's context switches, an optimal all-day interface must adapt its virtual content display and interactions. The interface that is optimal in one context, that is, the most efficient yet least intrusive, may be the worst interface for another context. This work aims to propose a research agenda to design and validate different adaptation techniques and context-aware AR interfaces and introduce a framework for the design of such intelligent interfaces.