CHCI Contributions at VL/HCC 2023

September 25, 2023

CHCI faculty and students are making significant contributions at the IEEE Symposium on Visual Languages and Human-Centric Computing (VL/HCC), held October 2-6, 2023 in Washington DC, USA. VL/HCC is the premier international forum for research in this area. Established in 1984, the conference aims to support the design, theory, application, and evaluation of computing technologies and languages for programming, modeling, and communicating that are easier for people to learn, use, and understand.

Several CHCI faculty, including Yan Chen, Sang Won Lee, Chris Brown, and Abiola Akanmu, along with many students, have co-authored the research papers listed below; two of these faculty are also serving as session chairs. CHCI members are listed in bold, and presenters are underlined.

Session Chairs:

Yan Chen, Session Chair

Session Title: End-User Programming: Research Papers

Chris Brown, Session Chair

Session Title: Software Demonstrations: Posters and Showpieces

Research Papers:

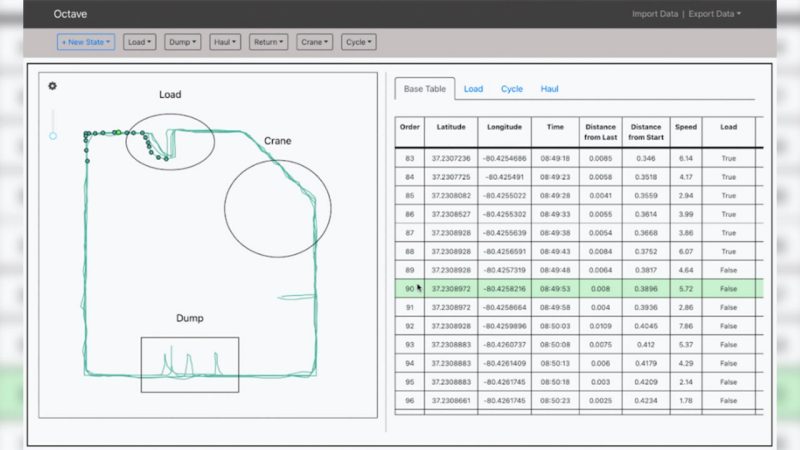

Authors: Daniel Manesh, Andy Luu, Mohammad Khalid, Chinedu Okonkwo, Abiola Akanmu, Ibukun Awolusi, Homero Murzi and Sang Won Lee

Title: Octave: an End-user Programming Environment for Analysis of Spatiotemporal Data for Construction Students

The construction industry is a new avenue for big data and data science as sensors and cyber-physical systems are deployed in the field. Construction students need to develop computational thinking skills to help make sense of this data, but existing data science environments built around textual programming languages create a significant barrier to entry. To bridge this gap, we introduce Octave, an end-user programming environment designed to help non-expert programmers analyze spatiotemporal data (e.g., as gathered by a GPS sensor) in an interactive graphical user interface. To aid exploration and understanding, Octave's design incorporates a high degree of liveness, highlighting the interconnection between data, computation, and visualization. We share the underlying design principles behind Octave and details about the system design and implementation. To evaluate Octave, we conducted a usability study with students studying construction. The results show that non-programmer construction students learned Octave easily and used it effectively to solve domain-specific problems from construction education. The participants appreciated Octave's liveness and felt they could easily connect it to real-life problems in their field. Our work informs the design of future accessible end-user programming environments for data analysis targeting non-experts.

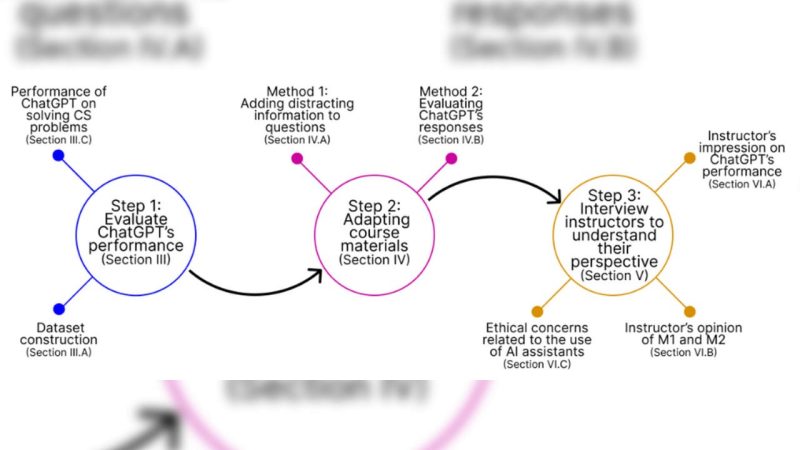

Authors: Tianjia Wang, Daniel Vargas Díaz, Chris Brown, Yan Chen

Title: Exploring the Role of AI Assistants in Computer Science Education: Methods, Implications, and Instructor Perspectives

The use of AI assistants, along with the challenges they present, has sparked significant debate within the computer science education community. While these tools demonstrate the potential to support students' learning and instructors' teaching, they also raise concerns about enabling unethical uses by students. Previous research has suggested various strategies aimed at addressing these issues. However, these strategies concentrate on introductory programming courses and address only one specific type of problem.

The present research evaluated the performance of ChatGPT, a state-of-the-art AI assistant, at solving 187 problems of three distinct types, collected from six undergraduate computer science courses. The selected courses covered different topics and targeted different program levels. We then explored methods to modify these problems in light of ChatGPT's capabilities in order to reduce potential misuse by students. Finally, we conducted semi-structured interviews with 11 computer science instructors. The aim was to gather their opinions on our problem modification methods, understand their perspectives on the impact of AI assistants on computer science education, and learn their strategies for adapting their courses to leverage these AI capabilities for educational improvement. The results revealed issues ranging from academic fairness to long-term impact on students' mental models. From our results, we derived design implications and recommended tools to help instructors design and create future course material that could more effectively adapt to AI assistants' capabilities.

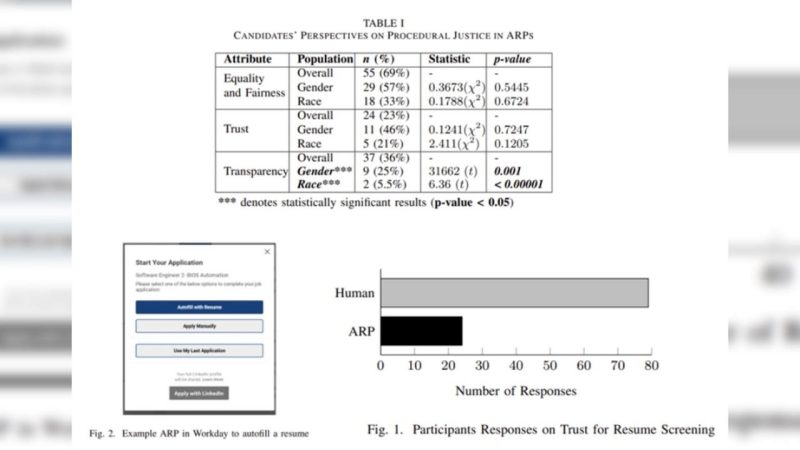

Authors: Swanand Vaishampayan, Sahar Farzanehpour, Chris Brown

Title: Procedural Justice and Fairness in Automated Resume Parsers for Tech Hiring: Insights from Candidate Perspectives

To streamline tech hiring processes, the use of talent management platforms has emerged as a new norm. AI-driven Automated Resume Parsers (ARPs) aim to simplify the application process for candidates and employers. However, ARP designs typically prioritize employers over candidates. Further, prior work demonstrates that these AI systems fail to achieve the intended goals of inclusivity and fairness for candidates, negatively impacting minorities in the tech hiring pipeline. As a result, aspiring IT professionals on the job market often spend significant time and effort preparing applications, only to have their resumes rejected by an AI model without ever receiving attention from a recruiter. This work studies candidates' perspectives of ARPs. We surveyed CS students, receiving responses from 103 undergraduate and graduate students, and analyzed their perspectives through the lens of procedural justice, a measure of fairness. We believe that by introducing procedural justice and adopting a human-centered design approach, AI models in the hiring pipeline can achieve the intended goals of inclusivity and fairness. The findings from this study can inform future designs of more transparent and fair ARPs.

Authors: Minhyuk Ko, Dibyendu Brinto Bose, Hemayet Ahmed Chowdhury, Mohammed Seyam, Chris Brown

Title: Exploring the Barriers and Factors that Influence Debugger Usage for Students

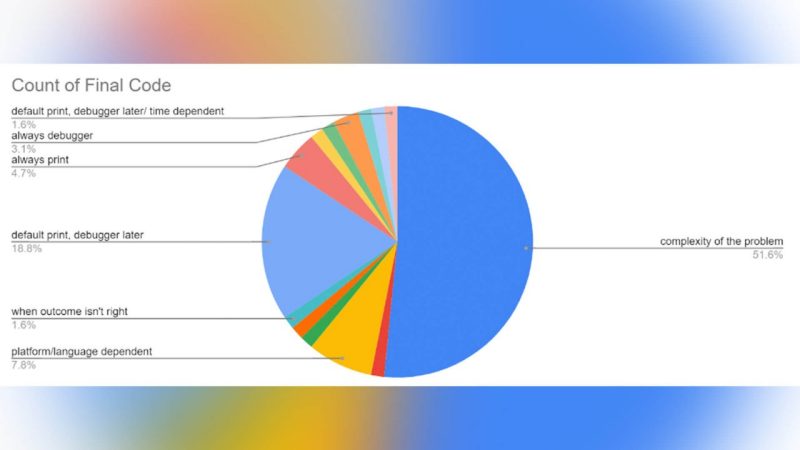

Debugging is one of the most expensive and time-consuming processes in software development. To support programmers and make this process more efficient, researchers and developers have introduced a wide variety of debuggers and tools that automatically find errors in code. However, there is a gap between industry developers and students regarding the skillful use of debuggers. We aim to understand this gap by studying the barriers that hinder new programmers from using debuggers. We conducted a survey of 73 students with varying levels of programming experience and performed a qualitative analysis of their responses. The goal was to extract insights into why students do not develop debugger usage skills. Our results suggest that a general lack of focus on debuggers in academic courses is one of the primary reasons students avoid them. At the same time, complex user interfaces and a lack of visualization also seem intimidating to many students, making debuggers unappealing. Based on the results, we provide guidelines to motivate future debugger designs and educational materials that improve debugger usage. A summary of our survey results is publicly available at https://github.com/minhyukko/vlhcc23.

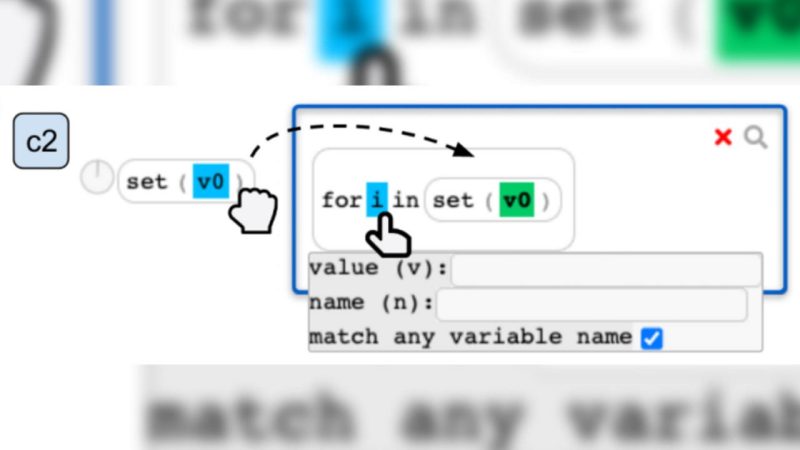

Authors: Ge Zhang, Yan Chen, Steve Oney

Title: RunEx: Augmenting Regular-Expression Code Search with Runtime Values

Programming instructors frequently use in-class exercises to help students reinforce concepts learned in lecture. However, identifying class-wide patterns and mistakes in students' code can be challenging, especially for large classes. Conventional code search tools are insufficient for this purpose because they are not designed to find the semantic structures underlying large corpora of student code, where the code samples are similar, relatively small, and written by novice programmers. To address this limitation, we introduce RunEx, a novel code search tool with which instructors can easily generate queries with minimal prior knowledge of code search and rapidly search through a large code corpus. The tool consists of two parts: 1) a syntax that augments regular expressions with runtime values, and 2) a user interface that enables instructors to construct highly expressive runtime and syntax-based queries and apply combined filters to code examples.

Our comparison experiment shows that RunEx identifies code patterns more accurately than baseline systems that rely on text matching alone. Furthermore, RunEx's user interface requires minimal prior knowledge to create search queries. By searching and analyzing students' code with runtime values at scale, our work introduces a new paradigm for understanding patterns and errors in programming education.