CHCI Contributions at ISMAR 2022

October 11, 2022

CHCI faculty and students are making significant contributions to ISMAR 2022, presenting papers, organizing workshops, and serving on conference committees in leadership roles. Joe Gabbard is a co-chair of the Journal Paper Science & Technology Programme Committee. Doug Bowman is the Liaison with the IEEE journal Transactions on Visualization and Computer Graphics (IEEE TVCG). Nayara Faria is a co-chair of the Inclusion, Diversity, Equity & Accessibility Committee. Other CHCI members are in bold below.

The IEEE International Symposium on Mixed and Augmented Reality (IEEE ISMAR) is the premier conference for Augmented Reality (AR), Mixed Reality (MR), and Virtual Reality (VR), attracting the world’s leading researchers from both academia and industry. ISMAR explores advances in commercial and research activities related to AR, MR, and VR, and has continued to expand its scope over the past several years. The 21st IEEE International Symposium on Mixed and Augmented Reality (ISMAR) takes place in Singapore, October 17-21, 2022.

Research Papers

Cassidy R. Nelson, Joseph L. Gabbard, Jason Moats, & Ranjana K. Mehta. User-Centered Design and Evaluation of ARTTS: An Augmented Reality Triage Tool Suite for Mass Casualty Incidents.

ARTTS is a head-worn Augmented Reality (AR) Triage Tool Suite containing an initial sorting tool, virtual assessment tool, and virtual triage tag to assist first responders in mass casualty incidents. The initial sorting tool prompts stunned responders through first-wave tasks to aid recalibration from shock to triage. The virtual assessment tool provides novice responders, potentially confused by the chaos, with a walkthrough of the SALT triage flowchart. Finally, current emergency medical triage processes rely on static paper tags that are susceptible to loss or to damage that leaves them illegible. ARTTS’ virtual triage tags are dynamic, can be updated automatically with vital sensors or manually through responder interaction, and employ user interface emergent features based on individual patient conditions. This paper describes the UX process used to develop ARTTS.

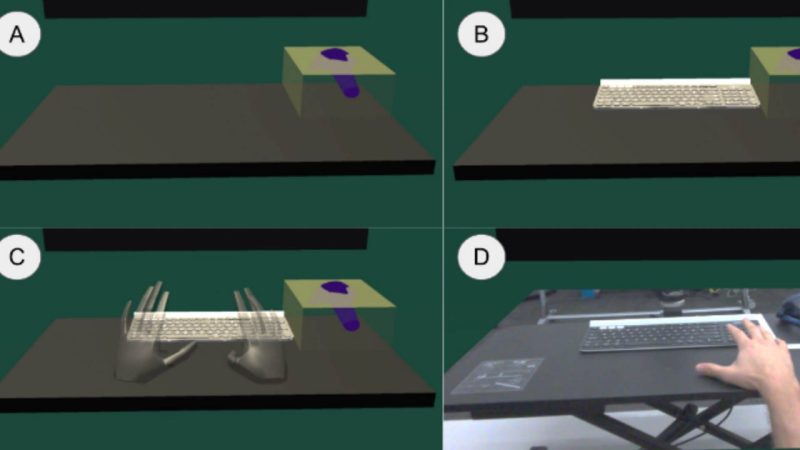

Alexander Giovannelli, Lee Lisle, and Doug Bowman. Exploring the Impact of Visual Information on Intermittent Typing in Virtual Reality.

For touch typists, using a physical keyboard ensures optimal text entry task performance in immersive virtual environments. However, successful typing depends on the user’s ability to accurately position their hands on the keyboard after performing other, non-keyboard tasks. Finding the correct hand position depends on sensory feedback, including visual information. We designed and conducted a user study where we investigated the impact of visual representations of the keyboard and users’ hands on the time required to place hands on the homing bars of a keyboard after performing other tasks. We found that this keyboard homing time decreased as the fidelity of visual representations of the keyboard and hands increased, with a video pass-through condition providing the best performance. We discuss additional impacts of visual representations of a user’s hands and the keyboard on typing performance and user experience in virtual reality.

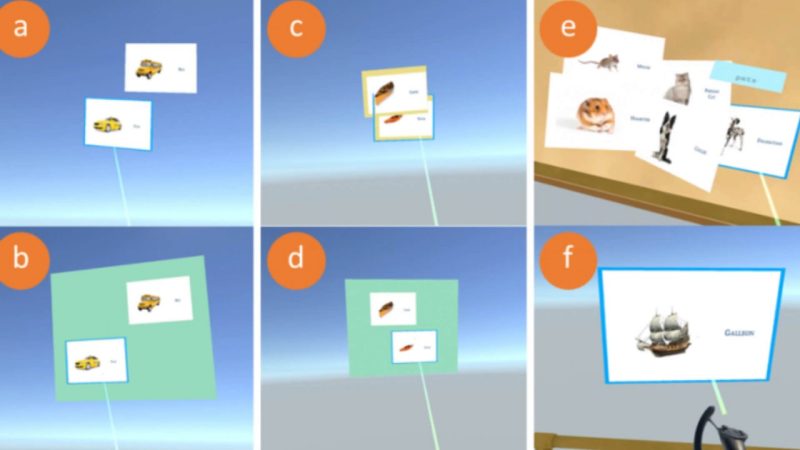

Ibrahim Tahmid, Lee Lisle, Kylie Davidson, Chris North, and Doug Bowman. Evaluating the Benefits of Explicit and Semi-Automated Clusters for Immersive Sensemaking.

Immersive spaces have great potential to support analysts in complex sensemaking tasks, but the use of only manual interactions for organizing data elements can become tedious. We analyzed the user interactions to support cluster formation in an immersive sensemaking system, and designed a semi-automated cluster creation technique that determines the user’s intent to create a cluster based on object proximity. We present the results of a user study comparing this proximity-based technique with a manual clustering technique and a baseline immersive workspace with no explicit clustering support. We found that semi-automated clustering was faster and preferred, while manual clustering gave greater control to users. These results provide support for the approach of adding intelligent semantic interactions to aid the users of immersive analytics systems.
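To make the idea of proximity-based cluster formation concrete, here is a minimal sketch. It is not the technique from the paper: the function name `proximity_clusters`, the distance threshold, and the use of simple connected-component grouping are all assumptions made for illustration.

```python
import math
from itertools import combinations

def proximity_clusters(positions, threshold):
    """Group objects whose positions lie within `threshold` of each other.

    Hypothetical illustration only: objects are linked when they are closer
    than `threshold`, and linked objects are merged with union-find.
    """
    parent = list(range(len(positions)))

    def find(i):
        # Follow parent links to the root, compressing the path on the way.
        while parent[i] != i:
            parent[i] = parent[parent[i]]
            i = parent[i]
        return i

    for a, b in combinations(range(len(positions)), 2):
        if math.dist(positions[a], positions[b]) <= threshold:
            parent[find(a)] = find(b)

    groups = {}
    for i in range(len(positions)):
        groups.setdefault(find(i), []).append(i)
    # Only groups with at least two objects count as clusters.
    return [members for members in groups.values() if len(members) > 1]

# Three documents placed close together and one placed far away (meters).
positions = [(0.0, 0.0, 0.0), (0.1, 0.0, 0.0), (0.15, 0.05, 0.0), (2.0, 2.0, 0.0)]
clusters = proximity_clusters(positions, threshold=0.2)
print(clusters)
```

In this sketch the three nearby objects form one cluster and the distant object is left ungrouped. A real system would also need to decide when proximity reflects intent, which is the question the study addresses.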

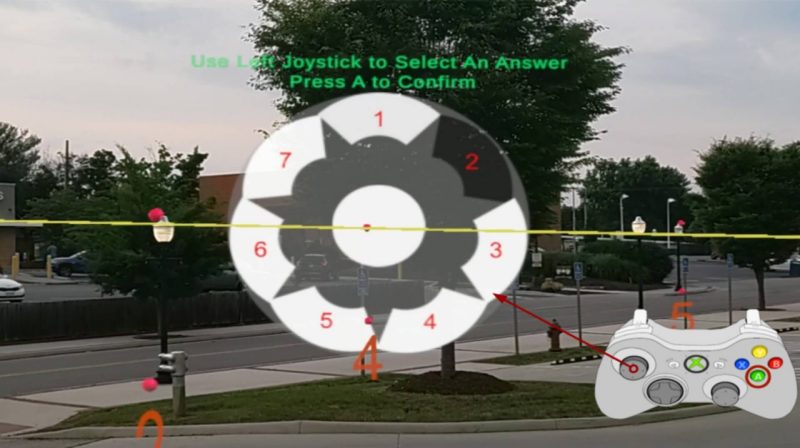

Yuan Li, Ibrahim Tahmid, Feiyu Lu, and Doug Bowman. Evaluation of Pointing Ray Techniques for Distant Object Referencing in Model-Free Outdoor Collaborative Augmented Reality.

Referencing objects of interest is a common requirement in many collaborative tasks. Nonetheless, accurate object referencing at a distance can be challenging due to the reduced visibility of the objects or the collaborator and a limited communication medium. Augmented Reality (AR) may help address these issues by providing virtual pointing rays to the target of common interest. However, such pointing ray techniques can face critical limitations in large outdoor spaces, especially when the environment model is unavailable. In this work, we evaluated two pointing ray techniques from the literature for distant object referencing in model-free AR: the Double Ray technique, which enhances visual matching between rays and targets, and the Parallel Bars technique, which provides artificial orientation cues. Our experiment in outdoor AR, involving participants as pointers and observers, partially replicated results from a previous study that only evaluated observers in simulated AR. We found that while the effectiveness of the Double Ray technique is reduced by the additional workload for the pointer and by human pointing errors, it is still beneficial for distant object referencing.

Jerald Thomas, S. Yong, and E.S. Rosenberg. Inverse Kinematics Assistance for the Creation of Redirected Walking Paths.

Virtual reality interactions that require a specific relationship between the virtual and physical coordinate systems, such as passive haptic interactions, are not possible with locomotion techniques using redirected walking. To address this limitation, recent research has introduced environmental alignment, which is the notion of using redirected walking techniques to align the virtual and physical coordinate systems such that these interactions are possible. However, the previous research has only implemented environmental alignment in a reactive manner, and the authors posited that better results could be achieved if a predictive algorithm were used instead. In this work, we introduce a novel way to model the environmental alignment problem as a version of the inverse kinematics problem, which can be incorporated into several existing predictive algorithms, as well as a simple proof-of-concept implementation. An exploratory human subject study (N=17) was conducted to evaluate this implementation's usability as a tool for authoring planned-path redirected walking scenarios that incorporate physical interactivity. To our knowledge, this is the first study to evaluate redirected walking experience design tools, and it provides a possible framework for future studies. Our qualitative analysis of the results generated both guidance for integrating automatic solvers and broad recommendations for designing redirected path authoring tools.

C. You, B. Benda, E.S. Rosenberg, E. Ragan (VT/CHCI alum), B. Lok, and Jerald Thomas. Strafing Gain: Redirecting Users One Diagonal Step At A Time.

Redirected walking can effectively utilize a user's physical space when traversing larger virtual environments by applying virtual self-motion gains to a user's physical motions. In particular, curvature gain presents unique advantages in redirection but can lead to sub-optimal orientations. To prevent this and add utility to redirected walking, we formally present strafing gain. Strafing gain adds incremental lateral movements to a user's position, causing the user to walk along a diagonal trajectory while maintaining the user's original orientation. In a study with 27 participants, we tested 11 values to determine the detection thresholds of strafing gain. The study, which was modeled on prior detection threshold studies, found that strafing gain could successfully redirect participants to walk along a 5.57 degree diagonal to the right and a 4.68 degree diagonal to the left. Furthermore, a supplementary study with 10 participants was conducted, verifying that orientation was maintained throughout redirection and validating the obtained detection thresholds. We discuss the implications of these findings and potential ways of improving these quantities in real-world applications.
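To give a sense of scale for these thresholds, the short sketch below converts a diagonal angle into the lateral offset accumulated over a straight walk. It assumes the reported angle is measured between the user's facing direction and the resulting travel path, so the offset is the forward distance times the tangent of the angle. The function name `lateral_offset` and the 5 m walking distance are illustrative choices, not values from the paper.

```python
import math

def lateral_offset(forward_distance_m, diagonal_deg):
    """Sideways displacement after walking `forward_distance_m` meters
    along a path angled `diagonal_deg` degrees from the facing direction.

    Assumed geometry for illustration: offset = distance * tan(angle).
    """
    return forward_distance_m * math.tan(math.radians(diagonal_deg))

# Reported thresholds from the abstract; 5 m is a hypothetical walk length.
right_offset = lateral_offset(5.0, 5.57)
left_offset = lateral_offset(5.0, 4.68)
print(f"right: {right_offset:.2f} m, left: {left_offset:.2f} m")
```

Under these assumptions, a 5 m walk shifts the user sideways by a little under half a meter in either direction.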

Workshops

Skarbez, R. (formerly VT/ISE), Smith, M. (VT/ISE alum), Faria, N., & Peillard, E. (2022). Perceptual and Cognitive Issues in xR.

The crux of this workshop is the creation of a better understanding of the various perceptual and cognitive issues that inform and constrain the design of effective extended reality systems. In particular, long-term usage effects are inadequately understood. Meanwhile, mobile platforms and emerging display hardware (“glasses”) promise to sharply increase the number of users, as well as the duration of system usage. To fulfill usability needs, both research and industry need a thorough understanding of perceptual and intertwined cognitive factors: issues such as depth misinterpretation, object relationship mismatches, and information overload can severely limit the usability of applications, or even pose risks in their usage. Based on the knowledge gained, new interactive visualization and view management techniques, for example, can be iteratively defined, developed, and validated, and optimized to be congruent with human capabilities and limitations en route to more usable application interfaces.

Organizers: Nelson, C. R., Faria, N., Kopper, R. (VT/CHCI alum), Patrick, R. (2022). Inclusion, Diversity, Equity, Accessibility, Transparency, and Ethics in XR (IDEATExR).

This workshop has six facets: inclusion, diversity, equity, accessibility, transparency, and ethics. We expect researchers to submit early work that commits to one or more of these facets. These could include work with diverse participants, work examining skin-tone rendering in AR, computer vision algorithms for differently-abled hands, testing of accessibility and usability of a VR app for those with different sensory abilities, etc. Further, we anticipate papers that feature research explicitly addressing diversity in XR. Position papers informed by either a survey of the literature or extensive experience in the facets of this workshop are also well within our scope. The workshop brings disparate perspectives and research foci together under a shared goal to be inclusive, diverse, equitable, accessible, transparent, and ethical in XR. This goal can be shared by software, hardware, and human-focused researchers.