Congratulations Eugenia Rho, Brendan David-John and Bo Ji on receiving CHCI Planning Grants!

May 22, 2023

Eugenia Rho’s project (with Angela Scarpa), titled “EmotionAIze - Empathy-Driven Interactive Human-AI System for Countering Negative-Self Talk for Autistic Individuals,” and Brendan David-John and Bo Ji’s project, titled “Understanding the long-term impact and perceptions of privacy-enhancing technologies for bystander obscuration in wearable AR displays,” each received a CHCI Planning Grant.

CHCI Planning Grant

The Center for Human-Computer Interaction (CHCI) invited proposals for internal planning grants leading to large-scale externally funded research efforts on any topic related to HCI. The goal of this program is to identify and support interdisciplinary teams of researchers in convergent research areas to prepare for submission of large external grant proposals. In this context, “large” grants are defined as externally funded projects with a total budget of $2M or more. A CHCI planning grant will support new or existing teams to perform activities, such as team building and project scoping, that are necessary to enable the submission of a competitive large proposal in the future. This internal planning grant should lead directly to an external proposal submission as a next step, but the external submission may be a stepping stone to the envisioned large grant (i.e., an external planning grant or small grant).

EmotionAIze - Empathy-Driven Interactive Human-AI System for Countering Negative-Self Talk for Autistic Individuals

Eugenia Rho and Angela Scarpa

Big Idea and motivation

Our Big Idea is to build safe, transparent, and inclusive human-AI interaction tools powered by generative models to address the mental health challenges faced by neurodiverse populations. Generative language models (LMs), such as the recent Generative Pre-trained Transformers (GPT), offer tremendous potential to revolutionize how we support the mental wellbeing and communication of neurodiverse individuals, including those on the autism spectrum.

Autistic people often struggle with communication and may experience stressful social interactions that can lead to higher rates of depression and anxiety. In fact, co-occurring mental health conditions are common across autistic populations. Such individuals may engage in negative self-talk (NST) that can exacerbate these mental health issues. Traditional therapy may not be effective for those with limited verbal communication skills. Thus, there is a need for a tool that can help autistic individuals to self-encourage and support their mental wellbeing. To address this, we propose to develop EmotionAIze, an interactive positive affirmation tool powered by GPT-4 that generates contextualized, tone-adaptive empathy-based counter responses to NST expressed as multimodal inputs (text, audio, images, videos, tactile interactions with sensor-connected objects) from the user. The development and evaluation of EmotionAIze will enable us to tackle three key challenges as part of our Big Idea vision development, specifically:

- Challenge 1: Build Inclusive & Explainable Human-AI Interactions for Mental Health.

- Challenge 2: Incorporate Neurodiverse Communication Patterns in Training Datasets.

- Challenge 3: Develop Inclusive Evaluation Frameworks for Human-AI Tools for Neurodiverse Users.

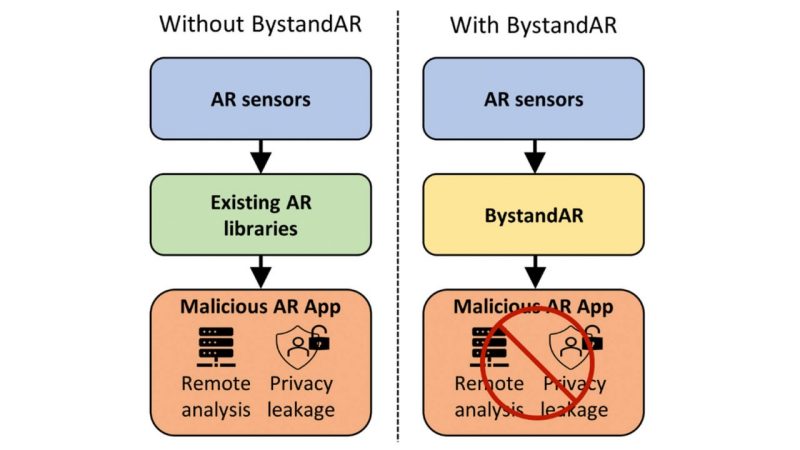

Understanding the long-term impact and perceptions of privacy-enhancing technologies for bystander obscuration in wearable AR displays

Brendan David-John (PI) and Bo Ji (Co-PI)

External Collaborators: Dr. Evan Selinger, Philosophy (RIT), Dr. Jiacheng Shang (Montclair State), Dr. Y. Charlie Hu (Purdue)

Big idea and motivation

Wearable Augmented Reality (AR) devices are set apart from other mobile devices by the immersive experience they offer. While the powerful suite of sensors on modern AR devices is necessary for enabling such an immersive experience, it can create unease in bystanders due to privacy concerns. A primary concern is the risk of identification and surveillance enabled by recent advancements in facial recognition.

Our big idea is to use privacy-enhancing technologies (PETs) to protect bystander privacy while enabling the future of AR. Several long-term research questions must be answered before our goal can be achieved: (RQ1) how does the public perceive AR PETs and how will they be deployed in practice? (RQ2) in what settings or scenarios are PETs a solution to bystander privacy? (RQ3) how can we avoid jeopardizing privacy in the long run, particularly if PETs normalize wearable AR in public but are limited to opt-in systems?
