Doug Bowman, Joe Gabbard, Nazila Roofigari-Esfahan and colleagues receive CHCI Planning Grant

January 26, 2022

Doug A. Bowman (Computer Science, Director, CHCI), Joe Gabbard (Industrial and Systems Engineering, member, CHCI), and Nazila Roofigari-Esfahan (Building Construction, member, CHCI), in collaboration with colleagues Hoda Eldardiry (Computer Science) and Jia-Bin Huang (Electrical and Computer Engineering), received a CHCI planning grant for their project, “Intelligent Augmented Reality (AR) for the Future of Work.” The CHCI planning grant program identifies and supports interdisciplinary teams of researchers in convergent research areas as they prepare to submit large external proposals.

The research team is conducting planning activities and preliminary research in preparation for seeking large-scale funding in the area of “Intelligent Augmented Reality Systems,” which addresses the convergence of AR and Artificial Intelligence. The team’s primary target is the NSF Future of Work at the Human-Technology Frontier program.

The research team will present their project at the ICAT Playdate on February 4 at 8:30 a.m.

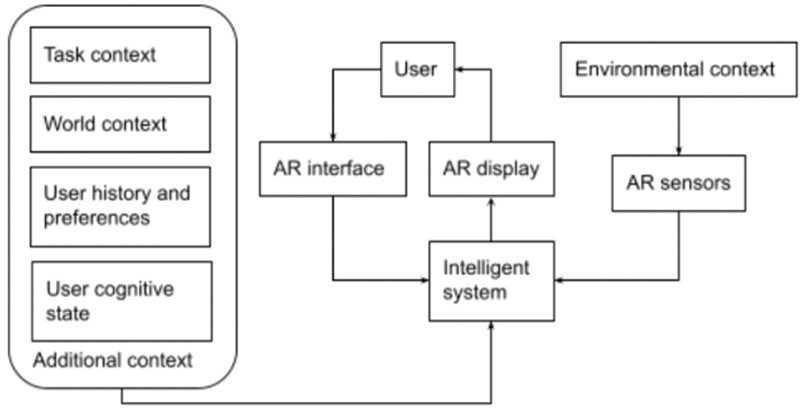

The research team envisions intelligent AR systems that can detect, understand, and reason about their context of use, and that can adapt their displays and interactions to that context. “Context” refers not only to the environment and objects around the user, but also to knowledge about the task, the world, and the users themselves: their history, preferences, and cognitive state (e.g., workload, fatigue). The figure above illustrates the intelligent AR concept.

Scenarios of use for intelligent AR in the workplace include:

- A construction manager wears AR glasses at the worksite. The system senses which areas of the site are being viewed and automatically loads relevant construction documents, displaying them as small, transparent thumbnails in the periphery of the display. The manager can view a document at full size by briefly fixating on it. When the manager begins to walk across the site, the documents minimize to allow an unobstructed view of the real world. The system also senses moving equipment and notifies the manager of nearby hazards.

- An office worker uses AR glasses to simulate a large multi-monitor display while seated at his desk. When a co-worker arrives and starts a conversation, parts of the virtual display fade so the two can see each other clearly. When the worker leaves for a meeting, the windows, documents, and tabs relevant to the meeting’s topic detach from the desktop and follow him into the conference room.

To realize this vision, research is needed in a number of convergent areas:

- Computer vision: How can we understand dense, dynamic 3D scenes in real time, at a semantic level?

- Machine learning: How can we predict, reliably and in real time, what information users need, what task(s) users are performing, and how the interface needs to adapt based on data from multiple contextual sources?

- AR information display: How can we design adaptive information displays that are salient and intuitively accessible, but also unobtrusive?

- AR interaction techniques: How can we allow users to easily access information, move or hide content, or otherwise refine what is displayed in AR when the intelligent system’s predictions are imperfect?

- AI-enhanced user interfaces: How should AI predictions be presented, and should the system be more like an intelligent agent or a traditional UI with embedded suggestions?

- AR in specific workplace domains: How can intelligent AR systems be exploited to enhance productivity and safety in a complex domain such as construction?

The Research Team:

The interdisciplinary team is rooted in the CHCI Immersive Experiences group, one of the foremost university research groups on VR/AR in the country, whose wide-ranging expertise and shared infrastructure can be leveraged for complex projects in intelligent AR. The team also draws on the Sanghani Center for Artificial Intelligence and Data Analytics, which has an excellent reputation and a long history of human-centered AI research.

Doug A. Bowman is a Professor of Computer Science and Director of the Center for HCI, working on user experience with immersive technologies. His 3D Interaction Group focuses on designing future AR interfaces for high-quality, always-on AR glasses. The group has explored the use of virtual monitors and virtual windows for productivity work, both in stationary settings and on the go. Their glanceable AR approach, funded by a Google grant, displays virtual information in the periphery so that it is out of the way but still accessible at a glance; both lab-based and authentic use studies have shown that this approach can support information access during other real-world tasks. Bowman’s group has also explored adaptive AR displays that move with the user, attach to nearby surfaces, and automatically resolve occlusion of real-world objects. One of his current intelligent AR projects is investigating whether conversations between the AR user and others can be reliably detected, so that AR information can be moved out of the way and only information relevant to the conversation remains visible.

Joseph L. Gabbard is an Associate Professor of Industrial and Systems Engineering and a CHCI member. His work centers on perceptual and cognitive issues related to the user experience and usability engineering of AR interfaces. His research on perceptual challenges in AR aims to understand and design for the challenges and limitations of human visual perception in areas such as depth and color perception, visual search, and context switching. He also has deep expertise in usability methods applied to AR, including the evaluation of user attention and cognitive workload in AR dual-task scenarios, which are critical issues for the intelligent, adaptive AR the team proposes. He has designed AR systems for work in many domains, including construction and civil infrastructure inspection, and has also developed adaptive AR interfaces. Gabbard and Bowman have worked together on a variety of projects for many years.

Jia-Bin Huang is an Assistant Professor of Electrical and Computer Engineering. His research interests lie at the intersection of computer vision, computer graphics, and machine learning. The central goal of his research is to build intelligent machines that can understand and recreate the visual world around us. In particular, he has worked on learning from videos, understanding the 3D structure of scenes from 2D imagery, and understanding human activity in videos, all of which are essential for detecting an AR user’s context to enable intelligent predictions and assistance. He works specifically on real-time computer vision algorithms for dense and dynamic environments.

Hoda Eldardiry is an Associate Professor of Computer Science. Her research in Artificial Intelligence and Machine Learning focuses on building human-machine collaborative AI systems that can learn context-aware and explainable models from multi-source, interconnected data. In intelligent AR, these sources include vision as well as other sensor data, background knowledge, task knowledge, and user knowledge. Her prior work also includes projects on personalized and adaptive learning in Augmented Reality and on training in Augmented Reality settings.

Nazila Roofigari-Esfahan is an Assistant Professor of Building Construction and a CHCI member. Her research aims to improve worker safety and productivity on construction worksites. She is interested in applying human-centered design and smart systems, including intelligent AR, to improve safety, knowledge management, and real-time decision making in construction. She has worked with Bowman on designing AR workstations for worksites.